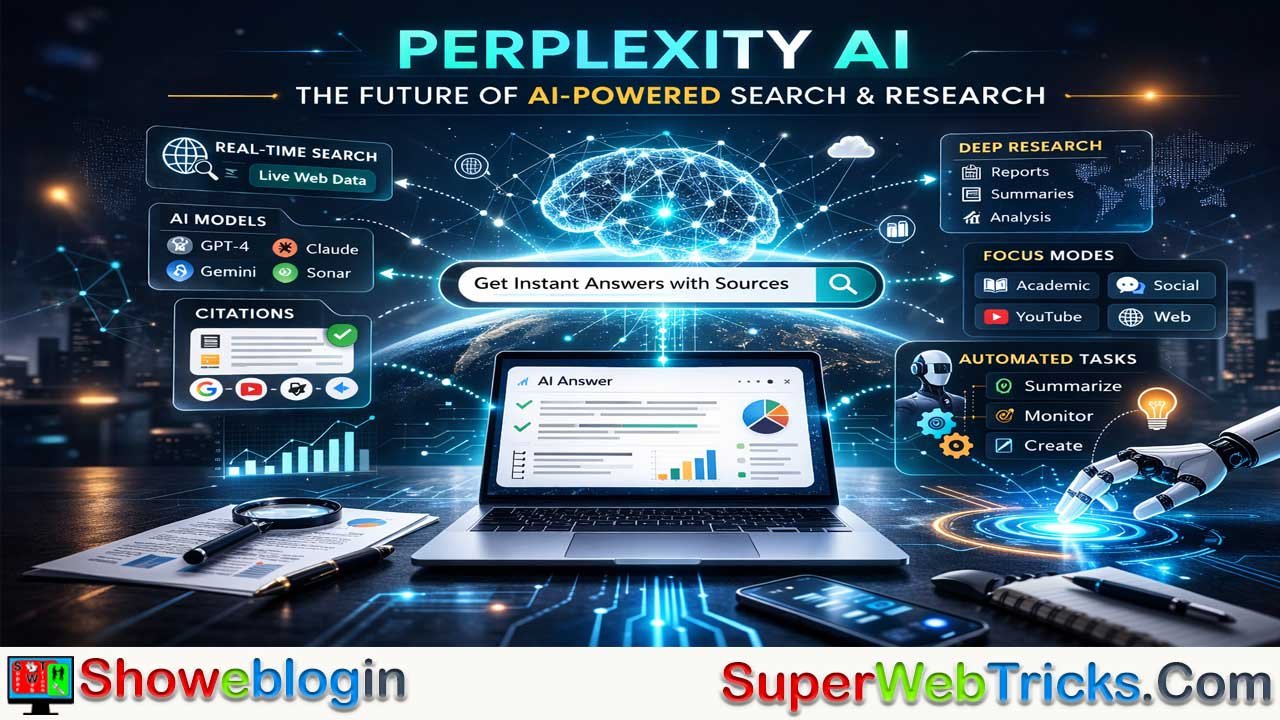

The modern internet is shifting from traditional search engines to AI answer engines. Perplexity is a platform that combines live internet search with advanced AI models. Instead of giving links, it provides clear answers with sources.

It uses a system called Retrieval-Augmented Generation (RAG) to fetch real-time data and reduce errors. The platform can use different AI models depending on the task. It also offers tools like Pro Search, Deep Research, and Focus Modes for better results.

Features like Spaces, Pages, and Tasks help organize research and automate updates. New tools such as Create, Computer, and the Comet browser allow AI to perform tasks and build projects. Overall, Perplexity works as an intelligent research assistant that helps users find, verify, and use information quickly.

| Aspect | Information |
|---|---|
| Platform Name | Perplexity AI |
| Core Function | AI-powered answer engine that provides direct answers with citations |
| Key Technology | Retrieval-Augmented Generation (RAG) with real-time web search |
| Main Purpose | Fast research, verified information, and knowledge synthesis |
| AI Models Used | Multiple models from OpenAI, Anthropic, Google, xAI, and Perplexity’s Sonar |
| Research Modes | Pro Search, Deep Research, and conversational search |
| Focus Modes | Web, Academic, Social, YouTube, Wolfram Alpha, Writing |
| Productivity Tools | Spaces, Pages, Tasks, and Watchlists |
| Agentic Features | Perplexity Create and Perplexity Computer for autonomous tasks |
| Browser Integration | Comet AI browser with built-in assistant |
| Developer Access | Sonar Search API for apps and AI agents |
| Pricing Model | Freemium with Free, Pro, and Enterprise tiers |

Perplexity AI Explained: The Future of AI-Powered Answer Engines and Research

The contemporary digital ecosystem is undergoing a radical structural reorganization, characterized by a transition from traditional, link-based search architectures to sophisticated, agentic answer engines. At the center of this metamorphosis is Perplexity, a platform that synthesizes the real-time breadth of the global internet with the advanced cognitive reasoning of frontier large language models.

Unlike the legacy search paradigm, which serves primarily as a directory of pointers to external documents, the system described here functions as an intelligent research assistant designed to navigate, process, and distill information into immediately actionable intelligence. This report provides an exhaustive technical and strategic evaluation of the platform, examining its underlying Retrieval-Augmented Generation (RAG) framework, its tiered service infrastructure, and its expansion into agentic browsing and developer services.

The Paradigm Shift in Information Retrieval

The foundational challenge of the modern internet is no longer the availability of data, but rather the efficiency of its discovery and the verification of its accuracy. Traditional search engines have increasingly become “digital yellow pages,” where user intent is often subordinated to the commercial imperatives of the “clicks and traffic” model. In contrast, the emergence of the “answer engine” model prioritizes direct, synthesized knowledge backed by verifiable evidence.

By integrating live web search with multiple leading AI models, the platform under analysis provides up-to-date answers that eliminate the need for users to manually sift through dozens of blue links.

The Technical Foundations of Accuracy

The operational integrity of the platform is predicated on a sophisticated implementation of Retrieval-Augmented Generation (RAG). This mechanism ensures that the large language model (LLM) does not rely solely on its static, pre-existing training data, which is subject to knowledge cut-off dates and the risk of hallucination.

Instead, the system initiates a real-time search pipeline that queries the open web, academic databases, and specialized indexes to retrieve the most current information available. These retrieved “snippets” are then scored, ranked, and passed to the LLM as context, which uses this grounded data to generate a response that is both conversational and empirically cited.
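
The retrieve, score, rank, and ground steps described above can be illustrated with a minimal Python sketch. This is not Perplexity's actual pipeline: the `Snippet` type, the word-overlap `score` function, the `build_grounded_prompt` helper, and the example.com URLs are all hypothetical stand-ins chosen to keep the example self-contained.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    source_url: str
    text: str


def score(query: str, snippet: Snippet) -> float:
    """Toy relevance score: fraction of query terms that appear in the snippet."""
    terms = set(query.lower().split())
    words = set(snippet.text.lower().split())
    return len(terms & words) / len(terms) if terms else 0.0


def build_grounded_prompt(query: str, snippets: list[Snippet], top_k: int = 2) -> str:
    """Rank snippets by score and pack the best ones into a numbered, cited context."""
    ranked = sorted(snippets, key=lambda s: score(query, s), reverse=True)[:top_k]
    context = "\n".join(
        f"[{i}] {s.text} (source: {s.source_url})" for i, s in enumerate(ranked, start=1)
    )
    return (
        "Answer using only the numbered sources below and cite them inline.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Hypothetical retrieved snippets; a real system would fetch these from a live index.
snippets = [
    Snippet("https://example.com/a", "Solar panel efficiency reached new records in lab tests"),
    Snippet("https://example.com/b", "A recipe for sourdough bread"),
    Snippet("https://example.com/c", "Panel efficiency depends on temperature"),
]
prompt = build_grounded_prompt("solar panel efficiency", snippets)
print(prompt)
```

In this toy run the unrelated snippet is dropped before the prompt is assembled, which is the property that keeps the model's answer tied to relevant, citable text. A production system would presumably replace the word-overlap score with a learned ranker.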

| Metric Category | Traditional Search Engine | Perplexity Answer Engine |
|---|---|---|
| Primary Output | Ranked list of external URLs | Synthesized, natural-language response |
| Cognitive Load | High (User must read and synthesize) | Low (System performs synthesis) |
| Verification Method | Manual clicking and scanning | Inline citations with one-click source preview |
| Knowledge State | Static (until index update) | Real-time (live web retrieval) |
| Contextual Awareness | Low (keyword-based) | High (conversational memory and intent) |

Multi-Model Orchestration and the Model Council

A strategic differentiator of this platform is its model-agnostic architecture. Rather than developing a single proprietary model to the exclusion of others, the system operates as a “harness” that orchestrates various frontier models from providers such as OpenAI, Anthropic, and Google.

This “Model Council” approach allows the system to deploy the most appropriate computational resource for a specific task—utilizing lightweight models for fast factual retrieval and more powerful reasoning engines for complex, multi-step research.

Model Specialization and Selection Logic

For subscribers of the premium tiers, the ability to manually switch between backend models provides a level of control that is essential for professional-grade research. Each frontier model brings unique strengths to the discovery process. For example, Anthropic’s Claude 3.5 Sonnet is frequently favored for its nuanced synthesis and writing style, while OpenAI’s GPT-4o is valued for its broad analytical capabilities and long-context recall. The system’s proprietary model, Sonar, is specifically optimized for low-latency web search and fast factual lookups.

The orchestration layer is capable of managing “sub-agents” that execute different parts of a single workflow simultaneously. In an advanced research session, one sub-agent might conduct deep web research while another processes document data or generates visual assets. This coordination is automatic and asynchronous, allowing the system to maintain a “Perplexity velocity” that would be impossible for a human researcher to replicate manually.
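
The routing and sub-agent coordination described above can be sketched with Python's `asyncio`. Everything named here is an assumption made for illustration: the `ROUTES` table, the model labels, and the `run_subagent` stand-in do not reflect how the platform actually assigns models or schedules work.

```python
import asyncio

# Hypothetical routing table: task kind -> model label (labels are illustrative only).
ROUTES = {
    "quick_fact": "sonar",
    "writing": "claude",
    "analysis": "gpt",
}


def pick_model(task_kind: str) -> str:
    """Return the model label for a task kind, defaulting to the fast search model."""
    return ROUTES.get(task_kind, "sonar")


async def run_subagent(name: str, task_kind: str) -> str:
    """Stand-in for a sub-agent; a real one would call a model or tool here."""
    await asyncio.sleep(0)  # yield control so the sub-agents can interleave
    return f"{name} handled by {pick_model(task_kind)}"


async def run_workflow() -> list[str]:
    """Run the sub-agents concurrently and collect results in submission order."""
    return await asyncio.gather(
        run_subagent("web_research", "quick_fact"),
        run_subagent("report_draft", "writing"),
        run_subagent("data_review", "analysis"),
    )


results = asyncio.run(run_workflow())
print(results)
```

The point of the sketch is the shape of the design: a cheap lookup decides which model handles each part, and the parts run concurrently rather than one after another.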

Comparative Model Strengths in Research Workflows

| Model Provider | Specific Model Variant | Primary Research Strength |
|---|---|---|
| Anthropic | Claude 3.5 Sonnet / 4.0 | Synthesis, writing tone, and nuanced reasoning |
| OpenAI | GPT-4o / GPT-5 | Logical deduction, long-context recall, and general analysis |
| Google | Gemini 3.0 Pro / Ultra | Large-scale data processing and chart generation |
| xAI | Grok 4 | Real-time trend analysis and speed in lightweight tasks |
| Perplexity | Sonar (Llama-based) | Low-latency factual search and citation accuracy |

Functional Modalities: From Search to Deep Research

The platform provides several distinct modes of interaction, each tailored to a different level of investigative depth. This tiered functionality allows the system to act as both a quick factual reference and a comprehensive research partner.

Pro Search and Iterative Dialogue

Pro Search represents the evolutionary step beyond standard keyword searching. It functions as a conversational guide that engages with the user to fine-tune its research strategy. Instead of providing immediate, generic results, Pro Search may ask clarifying questions to ensure it understands the nuances of the query.

Once the intent is established, it conducts thorough research across multiple sources, delivering a well-organized response that anticipates follow-up needs. This iterative process is designed to mimic the interaction one might have with a human research assistant, where the dialogue itself helps to refine the knowledge discovery path.

Deep Research and Autonomous Synthesis

The “Research” mode (formerly known as Deep Research) is the platform’s most powerful investigative tool. It employs a framework that mimics human cognitive processes through iterative analysis cycles. When a user initiates a Research query, the system performs dozens of automated searches, screens hundreds of potential sources, and reasons through the material autonomously.

This process, which typically takes between 2 and 4 minutes, results in a comprehensive, structured report with logical sections and extensive citations. This mode is particularly effective for strategic planning, academic literature reviews, and market intelligence, where the volume of information would otherwise be overwhelming for a human to synthesize in a single session.

Focus Modes: Contextual Filters for Precision Retrieval

To further enhance the relevance of its outputs, the system utilizes “Focus Modes,” which allow users to specify the domain of their search. These modes act as smart filters that guide the AI in terms of which datasets to prioritize and what tone to adopt in its responses.

Domain-Specific Search Strategies

Focus modes are essential for reducing “noise” and avoiding hallucinations by grounding the AI in specialized, high-quality sources. For instance, “Academic Mode” focuses exclusively on peer-reviewed research and scholarly papers, providing structured answers with citations suitable for students and educators. Conversely, “Social Mode” prioritizes discussions on platforms like Reddit and X (Twitter), allowing users to tap into real-time sentiment, public reviews, and emerging trends.

| Focus Mode | Data Source Emphasis | Recommended Use Cases |
|---|---|---|
| Web | Entire indexed internet | General research, news, product comparisons |
| Academic | Scholarly journals, research papers | Students, researchers, and technical reviews |
| Social | Reddit, X, public forums | Sentiment analysis, user reviews, social trends |
| YouTube | Video transcripts and metadata | Summarizing long-form video content or briefings |
| Wolfram Alpha | Computational knowledge engine | Mathematical solutions, data visualization, physics |
| Writing | Pure LLM (no web search) | Generating, editing, or polishing existing text |

The “YouTube” focus mode is a particularly significant innovation for professional content analysis. By accessing video transcripts and metadata, it can generate comprehensive summaries of long-form videos, often including timestamps and key takeaways, which allows users to grasp the core message of a video without watching it in its entirety. This represents a major shift in how multimedia data is consumed and summarized for professional workflows.
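
One way to picture Focus Modes acting as "smart filters" is as an allow-list applied to candidate sources before synthesis. The sketch below is hypothetical: the `FocusMode` type, the source-type labels, and the `filter_sources` helper are assumptions, and the real modes are likely more nuanced than a strict filter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FocusMode:
    name: str
    source_types: frozenset  # an empty set means "no web retrieval, model only"


# Hypothetical source-type labels, loosely following the Focus Mode table.
FOCUS_MODES = {
    "web": FocusMode("web", frozenset({"site", "journal", "forum", "video"})),
    "academic": FocusMode("academic", frozenset({"journal"})),
    "social": FocusMode("social", frozenset({"forum"})),
    "youtube": FocusMode("youtube", frozenset({"video"})),
    "writing": FocusMode("writing", frozenset()),
}


def filter_sources(mode_name: str, candidates: list[dict]) -> list[dict]:
    """Keep only the candidates whose type the chosen focus mode allows."""
    allowed = FOCUS_MODES[mode_name].source_types
    return [c for c in candidates if c["type"] in allowed]


# Hypothetical candidate sources returned by a search step.
candidates = [
    {"url": "https://example.com/paper", "type": "journal"},
    {"url": "https://example.com/thread", "type": "forum"},
    {"url": "https://example.com/talk", "type": "video"},
]
academic_hits = filter_sources("academic", candidates)
writing_hits = filter_sources("writing", candidates)
print(academic_hits, writing_hits)
```

In this sketch, Writing mode returns no sources at all, mirroring its "Pure LLM (no web search)" description in the table.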

Tiered Subscription Infrastructure and Accessibility

The platform operates on a freemium model, offering a robust baseline service while reserving advanced models and higher usage limits for its premium tiers. This structure allows the platform to serve casual users while providing a professional-grade research environment for power users and organizations.

Comparison of Service Tiers

| Feature | Free (Standard) Plan | Pro (Individual) Plan | Max Plan |
|---|---|---|---|
| Monthly Cost | $0 | $20 | $200 (Historical/Special) |
| Daily Pro Searches | ~3 to 5 | 300+ | Highest Priority |
| Deep Research Credits | 1 per month (approx) | ~500 per month | Unlimited/High Rate |
| Model Flexibility | Automatic selection | Manual choice of GPT-4, Claude, etc. | Latest frontier access |
| File Uploads | Basic / Limited | Unlimited (PDF, CSV, Image) | Expanded Context |
| Image Generation | No access | Included | Advanced creative tools |
| Support | Standard Help Center | Priority (2 business day response) | Dedicated / VIP |

The “Education Pro” tier provides an important middle ground, offering a significant discount ($10/month) for verified students and educators. This plan includes 10x as many citations in answers and access to “Study Mode,” which can generate interactive flashcards and quizzes from research materials. For organizations, “Enterprise Pro” adds management features such as seat administration, higher rate limits, and compliance tooling, making it suitable for collaborative research in regulated data environments.

Productivity Ecosystem: Spaces, Pages, and Tasks

To transition from a simple “answer engine” to a comprehensive productivity platform, the system has integrated several features designed for project management and knowledge organization.

Perplexity Spaces: Collaborative Research Hubs

Spaces are dedicated workspaces that allow individuals and teams to organize their research more effectively. Within a Space, users can manage up to 50 files, including PDFs, DOCX files, and spreadsheets. These files can be cross-referenced with live web data, allowing the AI to summarize complex reports, identify outliers in datasets, or extract key arguments from multiple academic papers simultaneously. The collaborative nature of Spaces—where contributors can be invited and privacy settings customized—makes them ideal for team-based projects like proposal preparation or market analysis.

Perplexity Pages: From Research to Publication

A significant bottleneck in professional research is the transition from gathering data to presenting findings. “Perplexity Pages” addresses this by automatically generating structured, report-like content from user queries. These Pages add visual structure, headings, and images, and they can be exported to PDF or Markdown for external use. The “Discover” feed is a curated version of this capability, where editor-selected Pages on trending topics are shared with a global audience, allowing users to build credibility as curators and researchers.

Tasks and Watchlists: Automated Information Monitoring

For users who need to stay updated on rapidly evolving topics, the “Tasks” and “Watchlists” features provide automated, background monitoring. Tasks allow users to schedule recurring alerts (e.g., a daily summary of top news in a specific industry), while Watchlists work 24/7 to notify users of breaking news or price changes instantly. These outputs can be delivered via email or push notifications, effectively turning the platform into a passive information-gathering system that alerts the user only when relevant new data is discovered.
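
The idea of alerting "only when relevant new data is discovered" comes down to remembering what has already been reported. A minimal sketch of that behavior follows, with the `fresh_items` helper and the sample headlines invented purely for illustration. The platform's actual monitoring logic is not documented here.

```python
def fresh_items(seen: set[str], fetched: list[str]) -> list[str]:
    """Return items not reported in earlier runs, then remember them."""
    # dict.fromkeys removes duplicates within one fetch while keeping order.
    fresh = [item for item in dict.fromkeys(fetched) if item not in seen]
    seen.update(fresh)
    return fresh


# Hypothetical headlines from three scheduled runs of the same task.
seen_headlines: set[str] = set()
first_run = fresh_items(seen_headlines, ["Chip export rules updated", "New battery plant announced"])
second_run = fresh_items(seen_headlines, ["New battery plant announced", "Port strike ends"])
third_run = fresh_items(seen_headlines, ["Port strike ends"])

for run in (first_run, second_run, third_run):
    # An empty list means nothing new, so no notification would be sent.
    print(run)
```

The third run finds nothing new and would stay silent, which is the "passive" behavior the paragraph describes.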

The Agentic Shift: Perplexity Create and Perplexity Computer

The platform is increasingly moving beyond information synthesis toward autonomous execution—a field known as agentic AI. This shift is characterized by the system’s ability to not only answer questions but also perform real-world tasks on behalf of the user.

Perplexity Create (formerly Labs)

Perplexity Create is a creation engine that uses tools such as deep web browsing and code execution to turn natural language prompts into tangible deliverables. It can invest significant time (often 30 minutes or more of self-supervised work) to assemble information and build complex projects such as interactive dashboards, spreadsheets, or even simple web applications using HTML, CSS, and JavaScript. This marks a transition from “answering” to “producing,” where the AI handles the assembly and formatting of information into functional outputs.

Perplexity Computer: The Autonomous Software Worker

Perplexity Computer represents the frontier of the platform’s agentic ambitions. It operates software much as a human co-worker would: reasoning, delegating, and executing complete workflows that can run for hours. When given a desired outcome, Perplexity Computer breaks it into subtasks and creates sub-agents to execute them. These agents can navigate websites, interact with APIs, process data, and even write their own tools when they encounter a problem. The system provides a safe harness for powerful AI by running in an isolated compute environment with access to a real filesystem and browser.
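
Breaking an outcome into subtasks implies an ordering problem: some subtasks cannot start until others finish. The sketch below shows one generic way to resolve such dependencies using Python's standard `graphlib` module. The subtask names and the `plan` mapping are hypothetical, and nothing here is claimed to match Perplexity Computer's internal planner.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each subtask maps to the set of subtasks it depends on.
plan = {
    "collect_sources": set(),
    "extract_data": {"collect_sources"},
    "build_chart": {"extract_data"},
    "write_report": {"extract_data", "build_chart"},
}

# static_order() yields every subtask after all of its dependencies.
order = list(TopologicalSorter(plan).static_order())

# Stand-in for dispatching each subtask to its own sub-agent.
log = [f"sub-agent started: {task}" for task in order]
print("\n".join(log))
```

With these particular dependencies there is only one valid order, so the report is always written last. In a plan with independent branches, the same structure would also reveal which subtasks could run in parallel.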

The Comet Browser: An AI-Native Web Interface

The release of the Comet browser represents a strategic attempt to integrate AI discovery directly into the browsing experience, rather than treating it as a separate destination. Built on the Chromium foundation, Comet maintains compatibility with Chrome extensions while replacing the traditional “passive” browsing experience with an “agentic” one.

Key Features of the Comet Architecture

Comet differs from traditional browsers in its fundamental interface; the central URL bar is replaced by a dominant prompt box, signaling that the primary way to interact with the web is through questioning rather than navigation. The “Comet Assistant” travels with the user across the web, providing real-time summaries of articles, comparing prices across open tabs, and even drafting emails or managing calendars.

| Comet Browser Feature | Functional Impact | Workflow Benefit |
|---|---|---|
| Multi-Tab Intelligence | Understands and links all open tabs simultaneously | Eliminates context switching; allows cross-source synthesis |
| Agentic Execution | Can click links, fill forms, and make purchases | Automates repetitive digital chores and logistics |
| Background Assistants | Runs tasks asynchronously in the background | Increases throughput; tasks complete while user focuses elsewhere |
| Saved Shortcuts | Reusable multi-step commands triggered by “/” | Standardizes workflows like /tldr or /email-draft |
| Voice Interface | Supports natural conversation with the assistant | Allows hands-free research and navigation |

The significance of Comet lies in its ability to “chat with your tabs.” This feature allows a user to ask questions about any open tab or even compare data across three different articles simultaneously. This “semantic search” for a temporary browsing session removes the burden of manual reading and synthesis, effectively transforming the browser into a high-speed engine for getting things done.

Developer Platform and the Sonar Search API

For developers and enterprises, the platform offers programmatic access to its search infrastructure through the “Sonar” API. This allows external applications to leverage the same real-time web indexing and RAG pipeline that powers the public answer engine.

API Architecture and Performance

The Sonar API is built for the unique demands of modern AI workloads. Unlike traditional search APIs that return full pages, Sonar delivers ranked snippets and sub-document chunks that are already scored against the query. This allows for faster integration and more valuable downstream results for AI agents. The API platform also includes a software development kit (SDK) and an open-source evaluation framework called “search_evals,” enabling researchers to rigorously test the quality and latency of the search output.

| API Technical Spec | Detail / Benefit |
|---|---|
| Retrieval Stack | Low-latency stack with multi-stage re-ranking |
| Index Freshness | Processes tens of thousands of update requests per second |
| Response Format | Rich structured responses ready for AI agents |
| Optimization | Speculative decoding and disaggregated prefill |
| Privacy | No data logging or storage for API queries |

Companies like Zoom and Apollo have already integrated this API to supercharge their AI companions, allowing them to provide up-to-the-minute information and clinical or professional answers that are empirically cited.
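
To give a sense of what programmatic access might look like, the sketch below builds (but deliberately does not send) an HTTP request using only Python's standard library. The endpoint URL, the `sonar` model name, and the message-style payload are assumptions based on the common chat-completions convention. They should be checked against the official API reference before use, and `YOUR_API_KEY` is a placeholder.

```python
import json
import urllib.request

# Assumed endpoint; verify against the official API documentation.
API_URL = "https://api.perplexity.ai/chat/completions"


def build_request(question: str, api_key: str, model: str = "sonar") -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a search-grounded answer."""
    payload = {
        "model": model,  # assumed model identifier
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request("What changed in EU battery regulation this year?", api_key="YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

Sending the request would be a matter of passing it to `urllib.request.urlopen` with a real key. The response schema is not assumed here, so parsing is left out.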

Privacy, Security, and the Ethics of Information

As an information-gathering tool, the platform must balance its data collection practices with user privacy and security. It tracks user activity to personalize interactions and improve its engine, but it offers several safeguards, particularly for premium and enterprise users.

Data Protection Measures

Premium subscribers (Pro, Max, and Enterprise) can opt out of having their data used for AI model enhancement. For Enterprise customers, the platform asserts that user data is never used for training models. Furthermore, the system complies with privacy standards such as GDPR, HIPAA, and CCPA, which require data minimization and anonymization. Security audits are conducted regularly, and an “Incognito” mode is available to limit data sharing with external AI services during sensitive research sessions.

Mitigating the Risk of Hallucinations

The platform’s primary strategy for ensuring factual accuracy is its mandatory inclusion of real-time citations. By forcing the LLM to generate responses only from a specified, high-quality set of retrieved documents, the risk of describing nonexistent events or legal cases is drastically reduced. This “trust and factuality” model positions the tool as an “anti-hallucination mechanism” for professional users who cannot afford the inaccuracies often associated with pure generative models.
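
A citation requirement like the one described above can also be checked after generation. The following sketch flags sentences that carry no citation marker, or that cite a source number that was never retrieved. The `[n]` marker format, the `uncited_sentences` helper, and the sample answer are assumptions for illustration rather than the platform's own validation logic.

```python
import re


def uncited_sentences(answer: str, num_sources: int) -> list[str]:
    """Return sentences that have no [n] marker or cite a source outside 1..num_sources."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    flagged = []
    for sentence in sentences:
        cited = [int(n) for n in re.findall(r"\[(\d+)\]", sentence)]
        if not cited or any(n < 1 or n > num_sources for n in cited):
            flagged.append(sentence)
    return flagged


# Hypothetical answer generated from two retrieved sources.
answer = "The court ruled in 2021 [1]. The fine was doubled [3]. Appeals are pending."
flagged = uncited_sentences(answer, num_sources=2)
print(flagged)
```

Here the second sentence is flagged because it cites a third source when only two were retrieved, and the last sentence is flagged because it cites nothing. Such a check only confirms that citations are present and in range, not that the cited source actually supports the claim.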

Strategic Implementation and Prompting for Power Users

To extract the maximum value from an answer engine, users must shift their mental model from “navigating” to “thinking and delegating.” This requires a new set of best practices in prompting and workflow management.

Advanced Prompting Strategies

Unlike traditional LLMs, the web search models used in this system require specific contextual cues to guide their retrieval process. Adding just two or three extra words of context can dramatically improve performance. For example, instead of asking “Tell me about climate models,” a power user would prompt: “Explain recent advances in climate prediction models for urban planning.”

| Prompting Principle | Application | Rationale |
|---|---|---|
| Specificity over Breadth | Add context (dates, industries, roles) | Guides the search engine toward relevant niches |
| Avoid Few-Shot | Do not provide examples within the prompt | Prevents the AI from searching for your examples |
| Expert Terminology | Use terms that would appear in authoritative papers | Triggers retrieval from high-quality sources |
| Conditional Language | Instruct the model to say “I don’t know” | Reduces the pressure to hallucinate when data is missing |

Power users are also encouraged to set up “Detailed Profiles,” which allow the system to tailor its expertise level, terminology, and analogies to the user’s specific industry and role. By configuring these settings and utilizing specialized Spaces for different projects, the system becomes a personalized knowledge partner that grows more effective over time.
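
Two of the prompting principles from the table, adding specific context and giving the model permission to say it does not know, are mechanical enough to capture in a small helper. The `build_search_prompt` function and its wording are hypothetical. It is a convenience sketch, not an official prompt format.

```python
def build_search_prompt(topic: str, context: list[str], allow_unknown: bool = True) -> str:
    """Combine a specific request with context cues and an optional 'I don't know' clause."""
    parts = [topic.strip().rstrip(".")]
    if context:
        # Context cues (dates, industries, roles) narrow what gets retrieved.
        parts.append("Context: " + "; ".join(context))
    prompt = ". ".join(parts) + "."
    if allow_unknown:
        # Conditional language reduces the pressure to invent an answer.
        prompt += " If the sources do not cover this, say you do not know."
    return prompt


prompt = build_search_prompt(
    "Explain recent advances in climate prediction models for urban planning",
    ["published since 2023", "audience: city planners"],
)
print(prompt)
```

The helper deliberately includes no worked examples in the prompt, in line with the "Avoid Few-Shot" row of the table.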

Conclusion: The Future of Agentic Discovery

The analysis of the Perplexity ecosystem reveals a platform that is rapidly evolving from a sophisticated search engine into a comprehensive, agentic research and execution environment. By orchestrating multiple frontier AI models and grounding them in real-time web data, the system effectively addresses the dual challenges of information entropy and artificial intelligence hallucinations.

The introduction of the Comet browser and the agentic capabilities of Perplexity Computer suggest a future where the distinction between “searching for information” and “executing a task” becomes increasingly blurred.

For professional users, the strategic implication is a significant reduction in the manual labor of knowledge work. The ability to autonomously synthesize hundreds of sources into a cited report, build mini-apps from natural language, and manage complex workflows across multiple tabs allows for a level of productivity that was previously unattainable.

As the platform continues to refine its “Model Council” and expand its developer API, it is positioned to remain at the vanguard of the next generation of intelligent information discovery systems. The ultimate value of the system lies in its mission to “power the world’s curiosity,” transforming the broken, commercialized internet back into a tool for genuine exploration and discovery.

FAQs about Perplexity AI

What is Perplexity AI?

Perplexity AI is an AI-powered answer engine that combines real-time web search with advanced language models to provide direct, cited answers instead of a list of links.

How is Perplexity different from traditional search engines?

Traditional search engines show ranked lists of websites, while Perplexity summarizes information and gives clear answers with sources, reducing the need to open many pages.

What technology powers Perplexity AI?

Perplexity uses Retrieval-Augmented Generation (RAG), which retrieves real-time information from the web and uses AI models to generate accurate responses based on that data.

Which AI models does Perplexity use?

Perplexity can use multiple models from providers such as OpenAI, Anthropic, Google, and its own Sonar model to handle different types of research and analysis.

What is Pro Search in Perplexity?

Pro Search is an advanced mode that asks follow-up questions, analyzes multiple sources, and produces detailed answers tailored to the user’s intent.

What is Deep Research mode?

Deep Research automatically performs many searches, reviews large numbers of sources, and produces a structured report with citations for complex topics.

What are Focus Modes in Perplexity?

Focus Modes filter search results by source type, such as academic papers, social media discussions, YouTube transcripts, or general web content.

Can Perplexity summarize videos or documents?

Yes. It can analyze YouTube transcripts, PDFs, and other files to create summaries, insights, and structured explanations.

Does Perplexity provide citations for its answers?

Yes. Most answers include clickable citations that allow users to verify the original sources of the information.

Is Perplexity AI free to use?

Perplexity offers a free plan with limited advanced searches, while paid plans provide access to more powerful models, higher limits, and advanced research tools.
